Track AI-generated code, tokens, lines, manual review rates, and bugs from Cursor, Codex, Claude, and more.

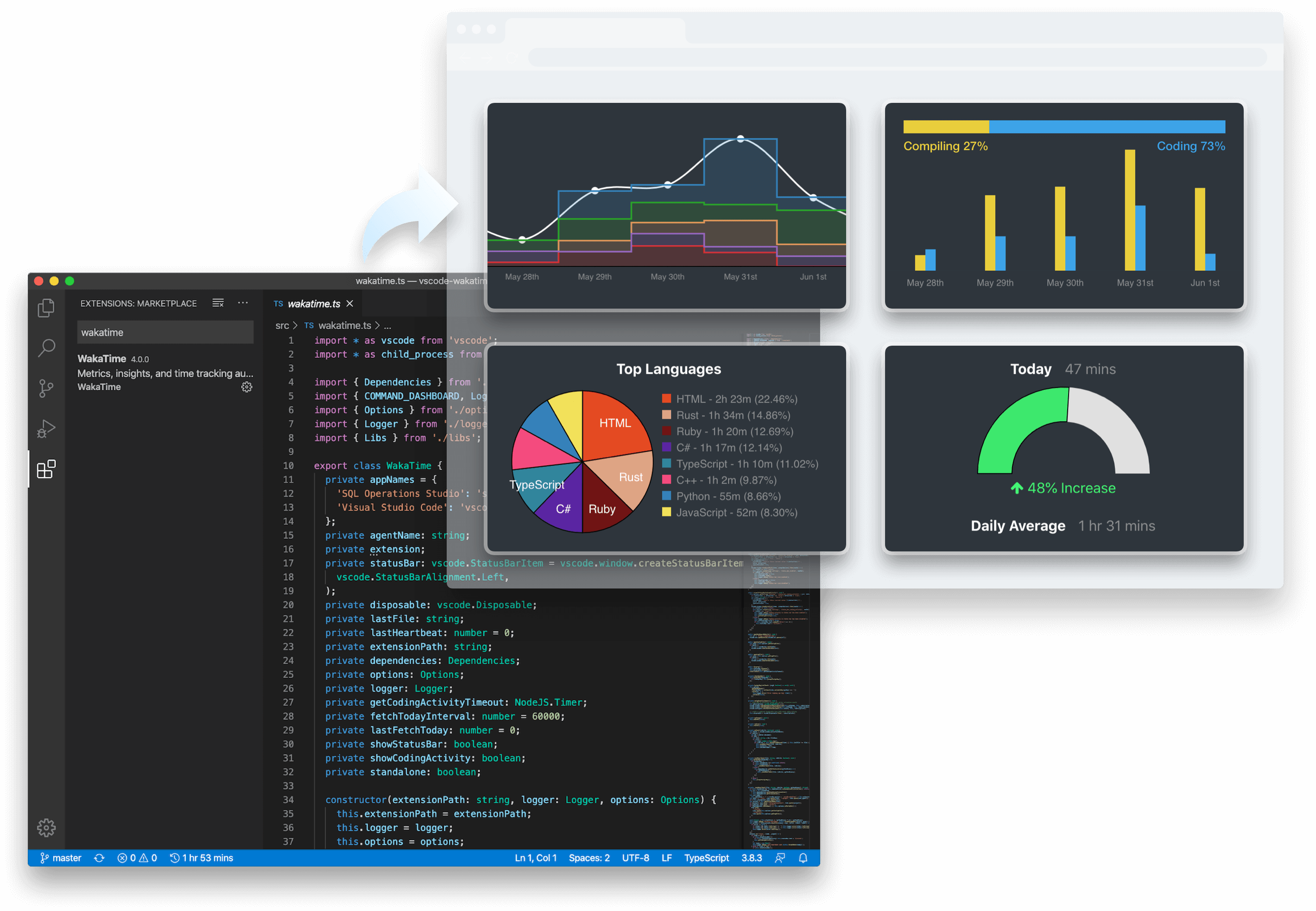

WakaTime turns AI coding activity into clear metrics for developers, teams, and company-wide decision-making.

Track how much code is being produced with AI across repos, projects, developers, and teams.

See how often developers have to correct or rework AI-edited code to spot quality issues earlier.

Compare model efficiency with average tokens per line so usage and cost tradeoffs are easy to evaluate.
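As a rough illustration of the tokens-per-line idea, the sketch below divides total tokens by total AI-written lines for each model. The record fields (`model`, `tokens`, `lines`) and the sample numbers are hypothetical and do not reflect WakaTime's actual data format or how it computes the metric.

```python
from collections import defaultdict


def tokens_per_line(sessions):
    """Return average tokens per generated line for each model.

    `sessions` is an iterable of dicts with hypothetical keys:
    "model" (str), "tokens" (int), and "lines" (int).
    """
    totals = defaultdict(lambda: [0, 0])  # model -> [tokens, lines]
    for session in sessions:
        totals[session["model"]][0] += session["tokens"]
        totals[session["model"]][1] += session["lines"]
    # Skip models with no generated lines to avoid dividing by zero.
    return {
        model: tokens / lines
        for model, (tokens, lines) in totals.items()
        if lines > 0
    }


# Made-up sample data, for illustration only.
sessions = [
    {"model": "model-a", "tokens": 1200, "lines": 40},
    {"model": "model-a", "tokens": 800, "lines": 10},
    {"model": "model-b", "tokens": 900, "lines": 60},
]
ratios = tokens_per_line(sessions)
```

A lower ratio means fewer tokens spent per line of output, but it says nothing about whether those lines were kept, so it is best read alongside the rework rate.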

Understand how developers interact with AI tools by tracking average prompt length in characters over time.
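One way to picture this metric is to group prompts by day and average their character counts. The sketch below assumes prompts are available as `(date, text)` pairs, which is a hypothetical shape chosen for the example rather than WakaTime's API.

```python
from collections import defaultdict
from statistics import mean


def avg_prompt_length_by_day(prompts):
    """Return the mean prompt length in characters for each day.

    `prompts` is an iterable of (day, text) pairs, where `day` is an
    ISO date string. This input shape is an assumption for the example.
    """
    lengths_by_day = defaultdict(list)
    for day, text in prompts:
        lengths_by_day[day].append(len(text))
    # ISO date strings sort chronologically, so the result is in time order.
    return {day: mean(lengths) for day, lengths in sorted(lengths_by_day.items())}


# Made-up sample data, for illustration only.
prompts = [
    ("2025-01-06", "fix the failing test"),
    ("2025-01-06", "add types"),
    ("2025-01-07", "rename this variable"),
]
trend = avg_prompt_length_by_day(prompts)
```

Plotting the resulting values over weeks or months would show whether prompts are getting longer and more detailed or shorter and more iterative.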

See which AI tools actually help you ship faster, where they introduce rework, and how your habits are changing over time.

Understand adoption, output, and trends across the team without relying on anecdotes, surveys, or self-reported usage.

Evaluate AI tools with real usage data and make smarter decisions about how your company adopts AI dev tools.