Automatically track AI-generated code, model efficiency, and how tools like Cursor or Claude impact your team’s velocity.

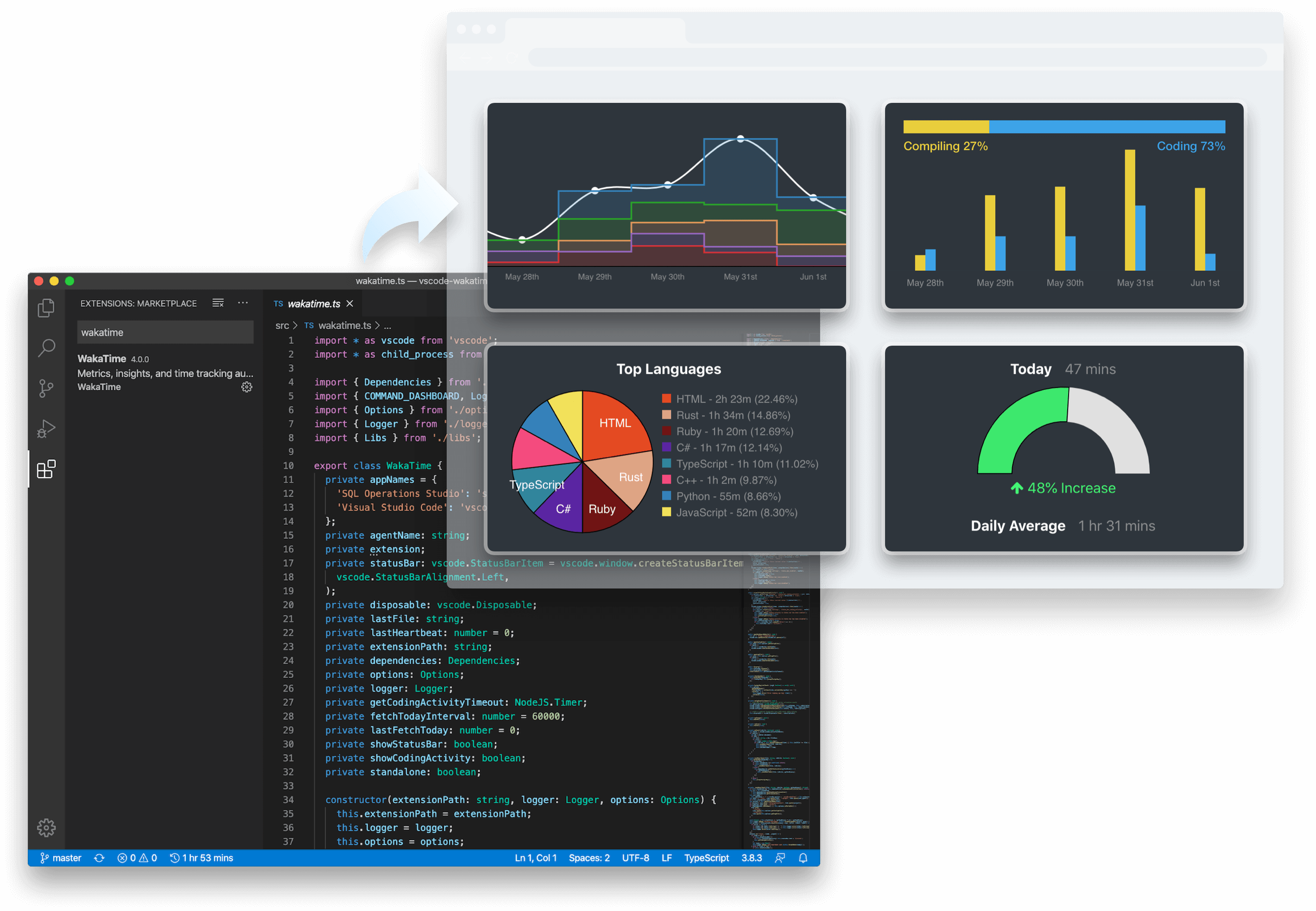

WakaTime turns AI coding activity into clear metrics for developers, teams, and company-wide decision-making.

Track how much code is being produced with AI across repos, projects, developers, and teams.

Track how often AI-generated code requires manual fixes to identify quality bottlenecks and model hallucinations.

Compare average tokens per line across models to balance output quality against API costs.
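As a rough illustration of the idea, average tokens per line can be computed by summing tokens and accepted lines per model and dividing. The sketch below assumes hypothetical usage records with `model`, `tokens`, and `lines` fields; these names and values are made up for the example and are not a WakaTime schema or API.

```python
from collections import defaultdict

# Hypothetical usage records. Field names and values are illustrative only,
# not a WakaTime data format.
records = [
    {"model": "model-a", "tokens": 1200, "lines": 40},
    {"model": "model-a", "tokens": 800, "lines": 10},
    {"model": "model-b", "tokens": 900, "lines": 45},
]


def tokens_per_line(records):
    """Return average tokens per generated line, keyed by model."""
    totals = defaultdict(lambda: [0, 0])  # model -> [total tokens, total lines]
    for record in records:
        totals[record["model"]][0] += record["tokens"]
        totals[record["model"]][1] += record["lines"]
    # Skip models with no lines to avoid dividing by zero.
    return {
        model: tokens / lines
        for model, (tokens, lines) in totals.items()
        if lines > 0
    }


for model, ratio in sorted(tokens_per_line(records).items()):
    print(f"{model}: {ratio:.1f} tokens per line")
```

A lower ratio is not automatically better: it only becomes useful for cost decisions when read alongside how often each model's output needs manual fixes.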

Understand how developers interact with AI tools by measuring how much input and context they provide.

See which AI tools actually help you ship faster, where they introduce rework, and how your habits are changing over time.

Understand adoption, output, and trends across the team without relying on anecdotes, surveys, or self-reported usage.

Justify your AI spend with objective data. Evaluate tool adoption and ROI to build a data-driven AI integration strategy.